The notification issued by the Ministry of Electronics and Information Technology (MeitY) on February 10, 2026, marks the definitive end of the “Wild West” era for generative artificial intelligence in India. By amending the IT Rules, 2021, the government has signalled that the era of voluntary guidelines is over; the era of “Hard Law” has arrived.

Compliance Checklist for Significant Social Media Intermediaries (SSMIs) such as Instagram, X, and YouTube

| Requirement | Deadline | Penalty for Non-Compliance |
| --- | --- | --- |
| SGI Labeling | Immediate upon Upload | Loss of Section 79 Safe Harbour; Direct Liability |
| Lawful Takedowns | Within 3 Hours of Notice | Potential Criminal Prosecution of Senior Officers |
| Sensitive Removal | Within 2 Hours of Knowledge | Mandatory Reporting; Loss of Legal Protections |
| Metadata Provenance | Permanent throughout Lifecycle | Deemed failure of due diligence; risk of audit |
| User AI Declarations | Prior to Publication | Account Suspension; Preservation of Evidence |
| Verification Tools | Real-time Deployment | Systemic non-compliance designation |

The End of “Soft Advisories”

India is no longer asking nicely. The notification issued on February 10, 2026, which takes effect on February 20, marks a foundational shift from a passive hosting model to proactive policing. For Big Tech, this is a 10-day sprint to avoid a total loss of legal immunity. The “Soft Advisory” era, characterized by periodic warnings from MeitY, has been supplanted by a rigid statutory framework in which missing a three-hour clock could end a platform’s status as a “neutral conduit” under Section 79. At the heart of this regulatory overhaul is the legal recognition of Synthetically Generated Information (SGI), a broad definition covering deepfakes, voice clones, and any algorithmically altered media that appears indistinguishable from reality. By adopting what may be the world’s most aggressive removal timeline, India has effectively institutionalized a regime of algorithmic accountability, forcing platforms to move at the speed of code rather than the speed of human review.

SGI and the 3-Hour Clock

The amended Rule 2(1)(wa) defines SGI as audio, visual, or audio-visual information that is “artificially or algorithmically created, generated, modified, or altered” in a manner that makes it appear true. The headline provision is the compression of takedown timelines from 36 hours to a mere three hours for content identified by court or government orders. For sensitive categories, such as non-consensual deepfake nudity or images depicting a person in sexual acts, the window is even tighter: platforms must act within two hours. The shorter windows respond to the “virality gap”: in the age of AI, a few hours of exposure can cause irreversible reputational harm or trigger massive public disorder.
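The two clocks described above reduce to simple date arithmetic. The following is a minimal sketch, not a statement of how any platform implements the rule: the category names `lawful_order` and `sensitive`, the function names, and the assumption that the clock starts at the moment the notice or knowledge is logged are all illustrative.

```python
from datetime import datetime, timedelta, timezone

# Windows as reported in the article: 3 hours for court/government orders,
# 2 hours for sensitive categories. The category keys are hypothetical names.
TAKEDOWN_WINDOWS = {
    "lawful_order": timedelta(hours=3),
    "sensitive": timedelta(hours=2),
}


def removal_deadline(received_at: datetime, category: str) -> datetime:
    """Return the latest moment by which the content must be taken down.

    Assumption: the clock starts at `received_at`, the time the notice
    (or actual knowledge) was logged by the platform.
    """
    if received_at.tzinfo is None:
        raise ValueError("received_at must be timezone-aware")
    return received_at + TAKEDOWN_WINDOWS[category]


def is_overdue(received_at: datetime, category: str, now: datetime) -> bool:
    """True once `now` is strictly past the deadline."""
    return now > removal_deadline(received_at, category)
```

Requiring timezone-aware timestamps avoids ambiguity when notices are logged by systems running in different regions.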

To prevent the criminalization of ordinary digital activity, the rules carve out critical exceptions. Routine or good-faith editing—such as colour correction, noise reduction, and compression—is exempt provided it does not materially misrepresent the substance of the information. Similarly, the mandate does not apply to AI used for accessibility enhancements, academic research, or conceptual content in documents like corporate presentations. These exclusions ensure that while malicious deepfakes face the three-hour clock, basic smartphone touch-ups and educational materials remain protected.

The Techno-Legal Shift

These rules are the operationalization of the “techno-legal” philosophy championed by IT Minister Ashwini Vaishnaw. Vaishnaw argues that the complexities of generative AI cannot be managed by standalone laws alone; the law must instead mandate the use of technical tools to authenticate reality. This strategy shifts the burden of proof from the state to the platform. Under Rule 4(1A), Significant Social Media Intermediaries (SSMIs) must require users to declare AI use during upload and deploy “reasonable and appropriate technical measures” to verify the accuracy of those declarations. Platforms are now legally required to act as technical verifiers of reality, or face immediate liability for their users’ deception.
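One way to picture the declare-then-verify duty is as a triage step that compares the user's declaration with the output of a detection tool. This is a hedged sketch only: the rules as described here do not prescribe a detector, a score, or a threshold, so `detector_score`, the `0.8` cut-off, and the three outcome strings are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Upload:
    upload_id: str
    user_declared_ai: bool   # the user's own declaration at upload time
    detector_score: float    # hypothetical classifier output in [0, 1]


def triage(upload: Upload, threshold: float = 0.8) -> str:
    """Decide how to handle an upload before publication.

    Returns one of three illustrative outcomes:
      "label"            - user declared AI use, so attach an SGI label
      "label_and_review" - no declaration, but the detector disagrees
      "publish"          - no declaration and no detector signal
    """
    if not 0.0 <= upload.detector_score <= 1.0:
        raise ValueError("detector_score must be between 0 and 1")
    if upload.user_declared_ai:
        return "label"
    if upload.detector_score >= threshold:
        return "label_and_review"
    return "publish"
```

For example, `triage(Upload("u1", False, 0.93))` returns `"label_and_review"`. Where the threshold sits determines the trade-off between missed deepfakes and false positives on lawful content.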

Prominent Labelling

The final notification reflects a tactical retreat by the government regarding visual disclosure standards. An October 2025 draft had proposed a rigid “10% screen area watermark” for all AI-generated visual content and audio markers that play during the initial 10% of a clip. This proposal met with intense pushback from the Internet and Mobile Association of India (IAMAI), which argued that such prescriptive requirements would degrade user experience to the point of making content unappealing. Consequently, the government dropped the specific 10% threshold in favor of a “prominent labeling” requirement. While this appears to be a concession, it actually increases legal jeopardy by replacing a numerical rule with a subjective standard. Platforms must now interpret what “prominent” means under the constant threat that an official might find their labels “insufficient,” thereby triggering the loss of Safe Harbour.

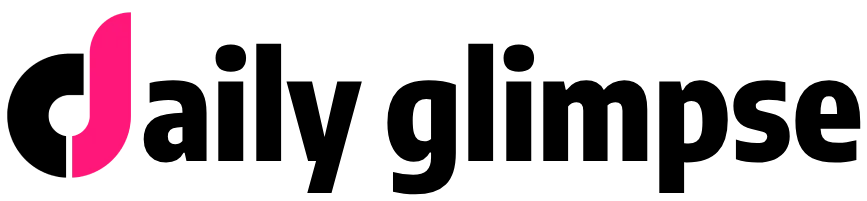

The Metadata Trap and Safe Harbour

Perhaps the most technically ambitious component is the mandate for Metadata Provenance. Rule 3(3)(b) requires intermediaries to embed “permanent metadata” or unique identifiers to ensure the traceability of the computer resource used to generate the SGI. Privacy advocates warn that this “digital fingerprint” could serve as a backdoor for mass surveillance, and argue that it ignores the principles of necessity established in K.S. Puttaswamy v. Union of India. The ultimate leverage is the Section 79 Safe Harbour threat: if a platform knowingly permits unlabelled SGI or misses the three-hour removal window, it loses its immunity and its CEO can be personally sued as the publisher of the illegal content.
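To make the idea of a unique identifier tied to the generating resource concrete, here is a minimal sketch of a provenance record. It is an illustration under stated assumptions, not the format the rules require: the field names, the `generator_id` value, and the use of a JSON sidecar are all hypothetical, and a sidecar is easy to strip, so it would not by itself satisfy a “permanent” embedding requirement.

```python
import hashlib
import json
import uuid


def build_provenance_record(content: bytes, generator_id: str) -> dict:
    """Build an illustrative provenance record for a piece of SGI.

    `generator_id` is a hypothetical identifier for the tool or computer
    resource that produced the content.
    """
    return {
        "sgi": True,
        "identifier": str(uuid.uuid4()),                  # unique per record
        "generator_id": generator_id,
        "sha256": hashlib.sha256(content).hexdigest(),    # binds record to bytes
    }


def matches(content: bytes, record: dict) -> bool:
    """Check whether `content` is the exact byte sequence the record describes."""
    return hashlib.sha256(content).hexdigest() == record["sha256"]


def to_sidecar(record: dict) -> str:
    """Serialize the record as JSON, e.g. for storage alongside the media file."""
    return json.dumps(record, sort_keys=True)
```

Because the hash covers the exact bytes, any re-encoding or compression by a downstream service breaks the match, which is one reason cross-platform provenance depends on shared standards rather than ad hoc records like this one.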

The Implementation Nightmare

How will YouTube, Meta, and X build real-time auto-detection tools by next week? For Big Tech, the February 20 deadline is an operational emergency. Building automated systems that can verify user declarations in real time is a challenge of unprecedented scale, particularly for regional Indian platforms that lack the multi-billion-dollar R&D budgets of Silicon Valley. These systems must be comprehensive enough to catch state-sponsored deepfakes while avoiding false positives that suppress legitimate satire, a technical balance that experts say is currently impossible to achieve in a three-hour window. Furthermore, preserving metadata across services demands a level of industry standardization that is still in its infancy. Forced into a binary choice between near-instant removal and criminal liability, platforms are likely to adopt a “moderation-first, review-later” mindset that risks massive over-censorship of lawful speech.

Conclusion

India is the first major democracy to enforce such strict traceability and removal mandates on AI. The “India Model” signals a new era of digital statecraft where authenticity should be the default, and anonymity is no longer a shield for deception. Platforms that fail to synchronize their clocks to this new three-hour reality will find themselves navigating a compliance nightmare where the cost of a single unlabelled video could be the personal prosecution of their leadership.
